The 2026 AI Infrastructure Roadmap: How to Move from Pilot Projects to Profitable Production

You have a functional model. It works beautifully in a Jupyter notebook. The results are promising, the stakeholders are excited, and the initial pilot proves that generative AI can solve the business problem.

Then, you try to deploy it.

Suddenly, you are hit with latency issues. Inference costs spiral out of control. The data pipeline that worked for 100GB chokes on 10TB. Security compliance flags the deployment, and your engineering team realizes they don’t have the tooling to monitor model drift in real time. This is the “pilot purgatory” where many enterprise AI initiatives die.

As we look toward 2026, the differentiator between market leaders and failed initiatives isn’t the quality of the algorithm—it’s the robustness of the AI infrastructure roadmap. Moving from a sandbox environment to a scalable, profitable production system requires a fundamental shift in architecture, operations, and mindset.

This guide outlines the critical stages for evolving your stack from a fragile experiment to a resilient enterprise AI architecture.

Stage 1 – AI Pilot Phase: Fast Experimentation

In the early days, speed is the only metric that matters. AI pilot projects are designed to validate hypotheses, not to withstand high-concurrency traffic. At this stage, your infrastructure should be lightweight and flexible.

Proof-of-concept goals

The primary goal is to prove business value with minimal engineering overhead. Avoid building custom infrastructure here. Leverage managed services (like Amazon SageMaker or Azure Machine Learning) that allow data scientists to spin up resources without waiting for DevOps support.

Tool selection

Choose tools that lower the barrier to entry. For an AI proof of concept, prioritize Python-based frameworks and pre-trained foundation models accessed via APIs. Building complex infrastructure at this stage is premature optimization.

Success metrics

Do not measure success by latency or cost-per-query yet. Focus on model accuracy, user acceptance, and the feasibility of the solution. If the model doesn’t solve the problem, the infrastructure behind it is irrelevant.

Stage 2 – Production Readiness Assessment

Once the pilot is validated, you must hit the brakes before hitting the gas. You cannot scale a prototype. You need to conduct a rigorous audit to determine AI production readiness.

Technical debt review

Prototype code is often “spaghetti code.” It lacks error handling, logging, and modularity. Before moving forward, refactor the codebase. Eliminate hard-coded paths and manual data dumps, and put all code and configuration under version control.

Data quality audit

A model is only as good as the data it consumes. In production, data streams are messy and unpredictable. Audit your data sources for consistency, completeness, and freshness. If your pilot relied on a static CSV file, you are not ready for production.
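As a rough illustration, the completeness and freshness checks described above can be sketched in a few lines of Python. The function name `audit_records`, the record layout, and the freshness window are assumptions made for this example, not part of any particular tool.

```python
from datetime import datetime, timedelta, timezone


def audit_records(records, required_fields, timestamp_field, max_age, now=None):
    """Return the share of records that are complete and the share that are fresh.

    records: list of dicts (hypothetical layout for this sketch)
    required_fields: fields that must be present and non-empty
    timestamp_field: name of a timezone-aware datetime field
    max_age: timedelta beyond which a record counts as stale
    """
    now = now or datetime.now(timezone.utc)
    total = len(records)
    if total == 0:
        return {"total": 0, "completeness": 0.0, "freshness": 0.0}

    # A record is complete when every required field has a non-empty value.
    complete = sum(
        1 for r in records
        if all(r.get(f) not in (None, "") for f in required_fields)
    )
    # A record is fresh when its timestamp falls within the allowed window.
    fresh = sum(
        1 for r in records
        if r.get(timestamp_field) is not None
        and now - r[timestamp_field] <= max_age
    )
    return {
        "total": total,
        "completeness": complete / total,
        "freshness": fresh / total,
    }
```

A check like this could run on every incoming batch and block the pipeline when either ratio falls below a threshold you choose.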

Security baseline

Security cannot be an afterthought. Implement an AI deployment checklist that covers access controls (RBAC), encryption at rest and in transit, and vulnerability scanning for container images.

Stage 3 – Scalable AI Infrastructure Design

This is where the heavy lifting begins. Designing a scalable AI infrastructure requires balancing performance requirements with budget constraints.

GPU vs CPU workloads

Not every inference task requires a heavy-duty H100 GPU. Analyze your workload. Can you run inference on CPUs? Can you use quantized models to reduce hardware requirements? Allocating the wrong compute resources is the fastest way to burn through your budget.
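To make the quantization question concrete, here is a back-of-the-envelope sketch of how much memory a model's weights need at different precisions. It counts weights only and ignores activations, KV cache, and runtime overhead, so treat the results as a lower bound. The 7-billion-parameter model is a hypothetical example.

```python
# Approximate storage per parameter at common precisions (weights only).
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params, precision):
    """Estimate the memory needed to hold model weights, in gigabytes (1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


# Hypothetical 7-billion-parameter model.
for precision in ("fp32", "fp16", "int8", "int4"):
    print(f"{precision}: {weight_memory_gb(7e9, precision):.1f} GB")
```

If the quantized footprint fits on a smaller GPU, or in CPU memory, the hardware decision changes before you have spent anything on tuning.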

Cloud vs bare metal

For most enterprises, a hybrid approach works best. Cloud offers elasticity for bursty workloads, while on-premises or bare metal solutions can offer better economics for steady-state training or inference at massive scale. Your AI infrastructure architecture must support the portability of workloads across these environments (often achieved via Kubernetes).

Network throughput

Large language models (LLMs) and computer vision systems move massive amounts of data. Ensure your network architecture supports high throughput and low latency. Bandwidth bottlenecks will starve your expensive GPUs, leaving them idle while they wait for data.

Stage 4 – MLOps and Automation Pipeline

Manual deployments are the enemy of reliability. To scale, you must implement a robust MLOps pipeline that treats machine learning artifacts with the same rigor as software code.

CI/CD for models

Implement Continuous Integration and Continuous Deployment (CI/CD) for your machine learning workflows. When a data scientist pushes code or a new model version, automated tests should run to validate performance before it touches production infrastructure.
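One way to picture the automated test step is a validation gate that a CI job calls before promoting a model. The sketch below is a minimal version under stated assumptions: the metric names, the thresholds, and the function `validate_candidate` are all hypothetical and would come from your own evaluation suite.

```python
def validate_candidate(candidate, baseline, min_accuracy=0.90,
                       max_regression=0.01, max_p95_latency_ms=200.0):
    """Return a list of failure messages; an empty list means the gate passes.

    candidate / baseline: dicts with 'accuracy' and 'p95_latency_ms' keys
    (hypothetical metric names for this sketch).
    """
    failures = []
    if candidate["accuracy"] < min_accuracy:
        failures.append(
            f"accuracy {candidate['accuracy']:.3f} is below the floor {min_accuracy:.3f}"
        )
    if baseline["accuracy"] - candidate["accuracy"] > max_regression:
        failures.append("accuracy regressed against the production baseline")
    if candidate["p95_latency_ms"] > max_p95_latency_ms:
        failures.append(
            f"p95 latency {candidate['p95_latency_ms']:.0f} ms exceeds the budget"
        )
    return failures
```

In a pipeline, the job would exit with a non-zero status whenever the returned list is not empty, which stops the deployment stage from running.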

Model versioning

You need to know exactly which version of a model was running in production at 3:00 PM last Tuesday. Tools like MLflow or Weights & Biases are essential for tracking experiments, parameters, and model lineage.

Rollbacks

Model deployment automation must include safety valves. If a new model version degrades performance or introduces bias, your system needs to automatically roll back to the previous stable version without downtime (using canary or blue/green deployment strategies).
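The decision logic behind a canary rollout can be quite small. The following sketch compares the canary's error rate with the stable version's and returns one of three actions. The thresholds and the function name `canary_decision` are assumptions for illustration; a real rollout would also watch latency and business metrics, and blue/green switching is not shown.

```python
def canary_decision(stable_errors, stable_total, canary_errors, canary_total,
                    min_requests=500, max_error_ratio=1.5, error_floor=0.001):
    """Decide whether to 'wait', 'rollback', or 'promote' a canary release."""
    # Not enough canary traffic yet to judge either way.
    if canary_total < min_requests:
        return "wait"

    stable_rate = stable_errors / stable_total if stable_total else 0.0
    canary_rate = canary_errors / canary_total

    # Tolerate a bounded increase over the stable error rate; the floor
    # avoids rolling back over noise when the stable rate is near zero.
    allowed = max(stable_rate * max_error_ratio, error_floor)
    return "rollback" if canary_rate > allowed else "promote"
```

The deployment controller would call this on a schedule and shift traffic back to the stable version as soon as it returns `"rollback"`.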

Stage 5 – Data Engineering and Pipeline Scaling

Your model needs a constant, reliable diet of data. Building robust data pipelines for AI ensures that your models are fed accurate information without manual intervention.

Data ingestion

Move away from batch processing if your use case demands real-time insights. Implement event-driven architectures (using Kafka or similar tools) to ingest streaming data.

Feature stores

As you scale, different teams will likely use the same data features (e.g., “customer churn risk score”) for different models. A feature store architecture creates a centralized repository for these features. This prevents teams from re-engineering the same data pipelines and ensures that every model computes a given feature the same way.
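The core idea, one shared definition per feature, can be shown with a toy in-memory registry. This is only a sketch: the class `FeatureRegistry` and the feature names are hypothetical, and production feature stores also handle storage, versioning, and online/offline serving, none of which appears here.

```python
class FeatureRegistry:
    """Holds exactly one shared definition per feature name."""

    def __init__(self):
        self._definitions = {}

    def register(self, name, compute_fn):
        # Refuse duplicates so two teams cannot define the same feature differently.
        if name in self._definitions:
            raise ValueError(f"feature '{name}' is already defined")
        self._definitions[name] = compute_fn

    def get_features(self, names, entity):
        """Compute the requested features for one entity (a plain dict here)."""
        return {name: self._definitions[name](entity) for name in names}


registry = FeatureRegistry()
registry.register("order_count", lambda c: len(c["orders"]))
registry.register(
    "avg_order_value",
    lambda c: sum(c["orders"]) / len(c["orders"]) if c["orders"] else 0.0,
)

customer = {"id": 42, "orders": [20.0, 35.0, 50.0]}
# Two different models request overlapping features from the same definitions.
churn_inputs = registry.get_features(["order_count", "avg_order_value"], customer)
upsell_inputs = registry.get_features(["avg_order_value"], customer)
```

Because both models read `avg_order_value` from the same definition, a fix to that feature reaches every consumer at once.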

Streaming pipelines

For applications like fraud detection or dynamic pricing, data needs to be processed in milliseconds. Invest in streaming infrastructure that can transform raw data into model-ready features in real time.

Stage 6 – Observability, Monitoring, and Reliability

Traditional software monitoring checks if a server is online. ML observability checks if the server is smart. A model can return a 200 OK status code while producing complete garbage.

Latency monitoring

Track the time it takes for a request to travel from the user, through the API gateway, to the model, and back. Set strict Service Level Objectives (SLOs) for inference latency.
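Latency SLOs are usually stated as percentiles rather than averages, because a handful of slow requests can hide behind a healthy mean. Here is a minimal sketch that computes a nearest-rank percentile and checks it against a target. The function names and the 200 ms target in the usage note are assumptions for illustration.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def meets_latency_slo(latencies_ms, p95_target_ms):
    """Return (observed p95, whether it is within the target)."""
    p95 = percentile(latencies_ms, 95)
    return p95, p95 <= p95_target_ms
```

For example, `meets_latency_slo(samples, 200.0)` over the last five minutes of end-to-end request timings would tell you whether a hypothetical 200 ms p95 objective is currently being met.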

Drift detection

Data changes over time. If your model was trained on 2023 data, it might fail to understand 2026 consumer behavior. Implement AI monitoring tools that detect data drift (the distribution of input data changing) and concept drift (the relationship between inputs and outputs changing).
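One common way to quantify data drift on a single numeric feature is the Population Stability Index (PSI), which compares the binned distribution of training data with that of live data. The sketch below is a plain-Python version under simple assumptions: equal-width bins taken from the training sample and a small epsilon to avoid dividing by zero. It is not how any specific monitoring product computes drift.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI = sum over bins of (a - e) * ln(a / e), where e and a are bin proportions."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all training values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Values outside the training range are clamped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A commonly cited rule of thumb treats a PSI above roughly 0.25 as a significant shift, but the right alert threshold depends on the feature and should be tuned against your own history.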

Cost tracking

Associate infrastructure costs with specific models or business units. If a specific model costs $5,000 a day to run but generates only $500 in value, you need to know immediately.
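Once costs are tagged per model, flagging the unprofitable ones is simple arithmetic. The sketch below uses the $5,000-versus-$500 example from above alongside a second, made-up model. The dictionary layout and the function name `flag_unprofitable` are assumptions for illustration.

```python
def flag_unprofitable(models, min_ratio=1.0):
    """Return (name, value-to-cost ratio) pairs below min_ratio, worst first."""
    flagged = []
    for name, m in models.items():
        if m["daily_cost"] > 0:
            ratio = m["daily_value"] / m["daily_cost"]
        else:
            ratio = float("inf")  # a model with no cost cannot be unprofitable
        if ratio < min_ratio:
            flagged.append((name, ratio))
    return sorted(flagged, key=lambda item: item[1])


# Hypothetical daily figures in dollars.
daily_figures = {
    "summarizer": {"daily_cost": 5000.0, "daily_value": 500.0},
    "fraud_scoring": {"daily_cost": 1200.0, "daily_value": 9000.0},
}
alerts = flag_unprofitable(daily_figures)
```

Estimating `daily_value` is the hard part in practice; the code only becomes useful once the business agrees on how to attribute value to a model.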

Stage 7 – Security, Compliance, and Governance

As AI regulations tighten globally, your infrastructure must enforce compliance by design. An AI governance framework is no longer optional.

Data privacy

Ensure that Personally Identifiable Information (PII) is masked or redacted before it enters the training pipeline. Implement strict access controls so data scientists only see the data they need.
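As a minimal sketch of redaction before training, the snippet below masks email addresses and US-style Social Security numbers with regular expressions. The patterns are illustrative and far from exhaustive; real pipelines typically combine pattern matching with dedicated PII detection, and the placeholder tokens are an assumption of this example.

```python
import re

# Illustrative patterns only; they will miss many real-world PII formats.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact_pii(text):
    """Replace matched emails and SSN-shaped numbers with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return SSN_RE.sub("[SSN]", text)


print(redact_pii("Contact jane.doe@example.com, SSN 123-45-6789."))
# prints: Contact [EMAIL], SSN [SSN].
```

Running redaction at ingestion, before data lands in the training store, means that downstream access controls protect data that is already masked.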

Model governance

Maintain an audit trail of how decisions are made. If a credit approval model denies a loan, you must be able to explain why. AI security best practices include securing the model endpoints to prevent adversarial attacks or model inversion.

Regulatory compliance

Stay ahead of frameworks like the EU AI Act or GDPR. Your infrastructure should allow for GDPR’s “right to be forgotten” (the right to erasure), meaning you may need the ability to retrain models to remove specific user data.

Stage 8 – Cost Optimization and Unit Economics

The cloud bill is often the shock that kills AI projects. AI cost optimization must be a continuous process, not a quarterly review.

GPU utilization

GPUs are expensive assets. If they are utilized at 30%, you are wasting money. Use virtualization technologies or GPU partitioning (like NVIDIA MIG) to share resources across multiple workloads.

Spot vs reserved capacity

For training workloads that can be interrupted and resumed from checkpoints, use Spot instances, which can be discounted by up to 90% compared with on-demand pricing. For steady-state inference, commit to reserved instances.
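Interruptions are not free, so it helps to fold the cost of redone work into the comparison. The sketch below estimates the cost of a training run on interruptible capacity, where `rework_fraction` is the extra compute spent repeating work lost to interruptions. All rates and percentages in the usage lines are hypothetical.

```python
def training_cost(on_demand_hourly, hours, discount=0.0, rework_fraction=0.0):
    """Estimate the cost of a training run.

    discount: fraction off the on-demand rate (0.7 means 70% cheaper)
    rework_fraction: extra compute hours, as a fraction, lost to interruptions
    """
    effective_rate = on_demand_hourly * (1 - discount)
    effective_hours = hours * (1 + rework_fraction)
    return effective_rate * effective_hours


# Hypothetical numbers: $10/hour on demand, 100 hours of training.
on_demand = training_cost(10.0, 100)
spot = training_cost(10.0, 100, discount=0.7, rework_fraction=0.2)
print(f"on-demand: ${on_demand:.2f}, spot: ${spot:.2f}")
```

With these made-up figures the interruptible run remains much cheaper even after repeating 20% of the work, which is why frequent checkpointing usually pays for itself.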

ROI tracking

Shift the conversation from “total cost” to “unit economics.” How much does it cost to generate one summary? To process one image? This granularity helps you reduce AI infrastructure costs by identifying exactly where the inefficiencies lie.
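Unit cost follows directly from three numbers: what the hardware costs per hour, how many units it can process at full load, and how busy it actually is. A minimal sketch, with hypothetical figures and a hypothetical function name:

```python
def cost_per_unit(hourly_cost, peak_units_per_hour, utilization):
    """Cost to produce one unit (one summary, one image) at a given utilization."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")
    return hourly_cost / (peak_units_per_hour * utilization)


# Hypothetical GPU: $4/hour, able to serve 2,000 summaries per hour at full load.
at_30_percent = cost_per_unit(4.0, 2000, 0.30)
at_80_percent = cost_per_unit(4.0, 2000, 0.80)
print(f"30% utilized: ${at_30_percent:.4f} per summary")
print(f"80% utilized: ${at_80_percent:.4f} per summary")
```

The same hardware produces very different unit costs depending on utilization, which is why utilization and unit economics belong on the same dashboard.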

Stage 9 – Organizational Scaling and Operating Model

Technology is easy; people are hard. A successful enterprise AI transformation requires an operating model that bridges the gap between data science and operations.

Platform engineering

Don’t force data scientists to become Kubernetes experts. Build an internal developer platform (IDP) that abstracts the complexity of the infrastructure. This allows data scientists to self-serve compute resources within guardrails defined by the platform team.

Talent readiness

Upskill your existing DevOps teams to understand the unique requirements of AI workloads. An AI operating model relies on cross-functional collaboration between data engineers, ML engineers, and site reliability engineers (SREs).

DevOps maturity

Apply standard DevOps principles—infrastructure as code (IaC), automated testing, and immutable infrastructure—to your AI stack.

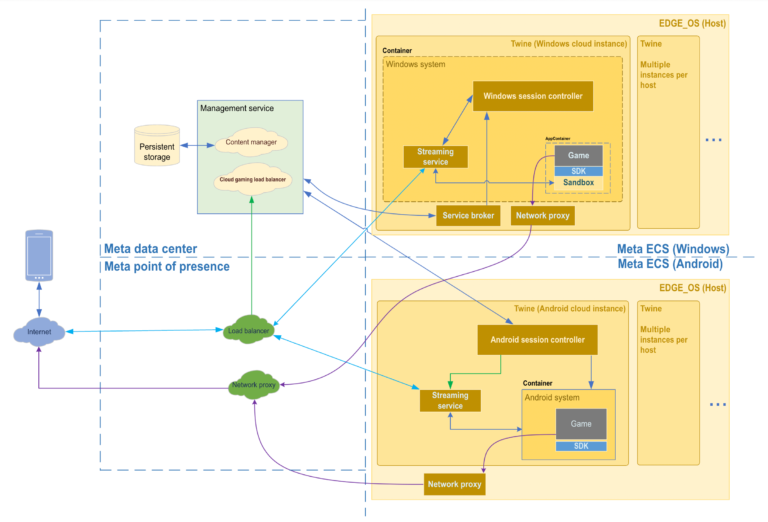

Reference Architecture Example (End-to-End AI Stack)

To visualize this, a standard robust stack for 2026 might look like this:

- Compute Layer: Hybrid mix of managed Kubernetes (EKS/GKE) for orchestration and on-premises GPU clusters for heavy training.

- Data Layer: Snowflake or Databricks for data warehousing, combined with a feature store like Feast.

- MLOps Layer: MLflow for tracking, Kubeflow for orchestration, and Argo CD for deployment.

- Serving Layer: NVIDIA Triton Inference Server or TorchServe for high-performance model serving.

- Observability Layer: Prometheus/Grafana for metrics, combined with specialized tools like Arize AI for drift detection.

Common Mistakes When Scaling AI to Production

Overengineering

Building a massive Kubernetes cluster for a model that receives 10 requests an hour is wasteful. Scale the infrastructure to match the actual demand, not the hypothetical demand.

Ignoring governance

Launching a model without compliance checks invites lawsuits and reputational damage. Governance must be a gatekeeper in the deployment pipeline.

Vendor lock-in

Relying entirely on proprietary tools from a single cloud provider makes it difficult to negotiate costs or move workloads. Prioritize open-source standards and containerization to maintain leverage.

12-Month AI Infrastructure Roadmap Template

- Q1: Assessment & Foundation: Audit current pilots. Establish the AI deployment checklist. Select cloud/compute partners.

- Q2: Pipeline Automation: Build the MLOps pipeline. Implement CI/CD. Set up the feature store.

- Q3: Scalability & Observability: Stress test the architecture. Implement AI monitoring tools. Define auto-scaling policies.

- Q4: Optimization & Governance: Focus on AI cost optimization. Conduct security audits. Finalize the AI governance framework.

FAQ – AI Infrastructure Roadmap

Q1: What is an AI infrastructure roadmap?

An AI infrastructure roadmap is a strategic plan outlining the hardware, software, processes, and talent required to move artificial intelligence initiatives from experimental pilots to scalable, reliable, and secure production systems.

Q2: How long does it take to move AI from pilot to production?

While timelines vary, a typical enterprise moves from pilot to production in 6 to 12 months. This includes time for infrastructure setup, security auditing, pipeline automation, and stress testing.

Q3: What infrastructure is best for production AI workloads?

The “best” infrastructure is usually a hybrid model. It typically involves container orchestration (like Kubernetes) for flexibility, high-performance object storage, and a mix of cloud-based and on-premises GPUs depending on cost and latency requirements.

Q4: How do companies control AI infrastructure costs?

Companies control costs by using spot instances for training, optimizing model size (quantization), sharing GPU resources via partitioning, and implementing strict budget alerts and FinOps practices to track unit economics.

Q5: What tools are required for MLOps at scale?

Scaling MLOps requires tools for version control (Git, DVC), experiment tracking (MLflow, Weights & Biases), orchestration (Kubeflow, Airflow), model serving (Triton, Seldon), and observability (Arize, Fiddler).

Q6: Why do AI pilots fail in production?

Pilots fail because they are built to prove functionality, not to meet non-functional requirements. They often lack scalability, fail to handle real-world data drift, become too expensive to run, or cannot meet security and compliance standards.

Conclusion

The journey to 2026 is not about discovering a magic algorithm; it is about building the factory that can run that algorithm reliably, securely, and profitably. Moving from pilot to production is a discipline that requires rigorous engineering and a clear vision.

The organizations that win in the AI era will be those that view infrastructure not as a utility, but as a strategic asset. Start auditing your pilots today. Formalize your AI infrastructure roadmap. The gap between experimentation and value is bridged by the choices you make in your stack right now.