Agentic AI Hosting in 2026: Why Your 2025 Architecture Can’t Support Autonomous Agents

The shift from generative AI to agentic AI represents the single largest architectural inflection point since the migration from on-premise data centers to the cloud. While 2024 and 2025 were defined by Large Language Models (LLMs) that waited for user prompts, 2026 is shaping up to be the year of the autonomous agent—software that thinks, plans, and executes tasks independently.

This transition isn’t just a software update. It is a fundamental reimagining of how compute resources are consumed. Legacy server architecture designed for standard web applications—or even static LLM inference—is rapidly becoming obsolete. The unpredictable, bursty, and highly parallel nature of agentic workflows breaks traditional scaling rules.

If your infrastructure strategy relies on the static provisioning models of 2025, you are already accumulating technical debt. This guide explores the critical infrastructure gaps facing modern enterprises and outlines the blueprint for a 2026-ready architecture capable of supporting the next generation of autonomous intelligence.

What Are Agentic AI and Autonomous Agents?

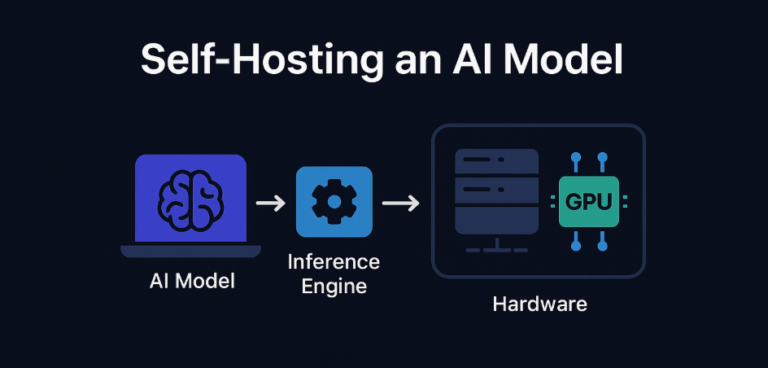

Before dissecting the infrastructure, we must define the workload. Agentic AI refers to systems capable of autonomous decision-making and action execution to achieve high-level goals. Unlike a chatbot that responds to a query and stops, an autonomous agent observes an environment, reasons about the best course of action, uses tools (APIs, browsers, code interpreters), and iterates until the objective is met.

This requires real-time autonomy. The system isn’t just retrieving data; it is maintaining state, managing a “memory” of past actions, and correcting its own errors. Furthermore, enterprise applications rarely rely on a single agent. Multi-agent coordination involves swarms of specialized agents—one for coding, one for testing, one for security review—working in parallel.

For infrastructure engineers, agentic AI boils down to “continuous, latency-sensitive, stateful, and extremely compute-intensive loops.”

Why 2025 Server Architectures Are No Longer Enough

The infrastructure that powered the initial wave of Generative AI is struggling to keep up. Most 2025 architectures were optimized for stateless HTTP requests or single-shot inference. Agentic workloads are different.

Latency limitations in traditional cloud setups kill agent performance. When an agent needs to “think” (perform chain-of-thought reasoning), query a database, and call an external API in a tight loop, every millisecond of network latency compounds. A 50ms delay in a standard app is negligible; the same 50ms delay paid once per step across a 20-step agentic workflow adds a full second of waiting, and more if each step makes several network calls. The result is a sluggish, unusable product.
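The compounding effect is simple arithmetic. The sketch below uses the 50ms and 20-step figures from the paragraph above; the function name and the three-hops-per-step case (model, database, external API called one after another) are assumptions added for illustration.

```python
def added_latency_ms(steps: int, hops_per_step: int, per_hop_ms: float) -> float:
    """Total network wait when every hop in every step pays the same delay."""
    return steps * hops_per_step * per_hop_ms


# Figures from the text: a 50 ms delay across a 20-step workflow.
one_hop = added_latency_ms(steps=20, hops_per_step=1, per_hop_ms=50)

# Assumption: each step makes three sequential calls (model, database, API).
three_hops = added_latency_ms(steps=20, hops_per_step=3, per_hop_ms=50)

print(f"1 hop per step:  {one_hop / 1000:.1f} s of added wait")
print(f"3 hops per step: {three_hops / 1000:.1f} s of added wait")
```

This ignores compute time entirely; it only counts the network delay the workflow pays on top of the actual reasoning.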

GPU bottlenecks are also shifting. It’s no longer just about having the biggest H100 cluster. It’s about how quickly you can load different models into memory as agents switch contexts. Network congestion and single-region fragility further exacerbate the issue. If your agents reside in US-East but need to interact with real-time data in Europe, the speed of light becomes your hard limit. Legacy server architecture simply cannot handle the distributed, chatty nature of these autonomous systems.

Compute Requirements for Agentic AI Workloads

To host agentic systems effectively, compute strategies must evolve from “always-on” to “instant-scale.”

Parallel processing is paramount. A multi-agent system might spawn ten sub-agents to research a topic simultaneously. Your infrastructure must support massive parallelization without queuing requests, which requires a scheduler far more aggressive than standard load balancers.
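As a rough illustration of the fan-out pattern, here is a minimal sketch using Python's standard `asyncio` module. The `research` coroutine is a hypothetical stand-in for a real sub-agent (a model call plus tool use), and the cap of ten concurrent sub-agents is an assumption, not a recommendation.

```python
import asyncio


async def research(subtopic: str) -> str:
    """Hypothetical sub-agent: stands in for a model call plus tool use."""
    await asyncio.sleep(0.01)  # simulated I/O wait
    return f"notes on {subtopic}"


async def fan_out(subtopics: list[str], max_parallel: int = 10) -> list[str]:
    """Run one sub-agent per subtopic, capping how many run at once."""
    limit = asyncio.Semaphore(max_parallel)

    async def run_one(subtopic: str) -> str:
        async with limit:
            return await research(subtopic)

    # gather returns results in the same order as the inputs
    return await asyncio.gather(*(run_one(s) for s in subtopics))


results = asyncio.run(fan_out([f"topic-{i}" for i in range(10)]))
print(len(results), results[0])
```

The semaphore is where infrastructure limits surface: if the platform cannot actually run ten sub-agents at once, the extra ones wait here, which is exactly the kind of queuing the paragraph above warns about.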

GPU density is another critical factor. High-performance AI servers need to support not just training, but heavy inference loads that fluctuate wildly. You might need zero GPUs one minute and fifty the next. This requires GPU hosting that offers either fractionalization (splitting one physical GPU into multiple isolated instances) or extremely fast cold-start times.

Crucially, low jitter performance is non-negotiable. Variance in processing time confuses agents that rely on timeouts and synchronization. A consistent 100ms response is often better for agent coordination than a response that varies between 20ms and 500ms.
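To see why a steady response can beat a faster but erratic one, consider a fixed timeout. The sketch below is illustrative only: the latency samples and the 200ms timeout are made-up numbers, chosen so that the jittery service has the lower average.

```python
import statistics


def timeout_rate(latencies_ms: list[float], timeout_ms: float) -> float:
    """Fraction of calls that would exceed a fixed timeout."""
    return sum(1 for v in latencies_ms if v > timeout_ms) / len(latencies_ms)


steady = [100.0] * 10            # always 100 ms
jittery = [20.0] * 9 + [500.0]   # usually 20 ms, occasionally 500 ms

for name, sample in (("steady", steady), ("jittery", jittery)):
    print(
        name,
        "mean:", statistics.mean(sample),
        "timeouts at 200 ms:", timeout_rate(sample, 200),
    )
```

The jittery sample is faster on average, yet one call in ten blows through the timeout, and in a multi-step workflow each of those misses triggers a retry or a stalled peer.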

Memory and Storage Demands of Autonomous Agents

The “brain” of an agent is its context window and its long-term memory. This places immense pressure on storage subsystems.

Agents rely heavily on vector databases to retrieve relevant information from vast datasets. These lookups happen constantly. High IOPS storage (Input/Output Operations Per Second) is essential to prevent the database from becoming the bottleneck. If the storage layer chokes, the agent freezes.

Furthermore, in-memory caching (using technologies like Redis or Memcached) becomes critical for maintaining the “short-term memory” or state of the agent. Vector databases must be co-located with the compute resources to minimize retrieval time. The separation of compute and storage, a hallmark of cloud-native design, may need to be reconsidered in favor of hyper-converged infrastructure for the most storage-intensive AI use cases.
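The idea of a cache sitting in front of vector lookups can be sketched in a few lines. Everything here is a toy: the `STORE` dictionary stands in for a real vector database, and Python's `functools.lru_cache` stands in for an external cache such as Redis or Memcached. The document names and embeddings are invented.

```python
import math
from functools import lru_cache

# Hypothetical in-process "vector store": document id -> embedding
STORE = {
    "runbook": (0.9, 0.1, 0.0),
    "invoice": (0.1, 0.9, 0.0),
    "postmortem": (0.7, 0.2, 0.1),
}


def cosine(a, b) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


@lru_cache(maxsize=1024)
def top_k(query: tuple, k: int = 2) -> tuple:
    """Return the k most similar document ids; repeated queries hit the cache."""
    ranked = sorted(STORE, key=lambda doc: cosine(query, STORE[doc]), reverse=True)
    return tuple(ranked[:k])


print(top_k((1.0, 0.0, 0.0)))   # computed by scanning the store
print(top_k((1.0, 0.0, 0.0)))   # served from the cache
print(top_k.cache_info().hits)
```

A real deployment would also need cache invalidation when the underlying documents change, which this sketch leaves out.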

Network Architecture for Real-Time AI Agents

In 2026, the network is the computer. For autonomous agents, low latency networking is the difference between a smart agent and a slow one.

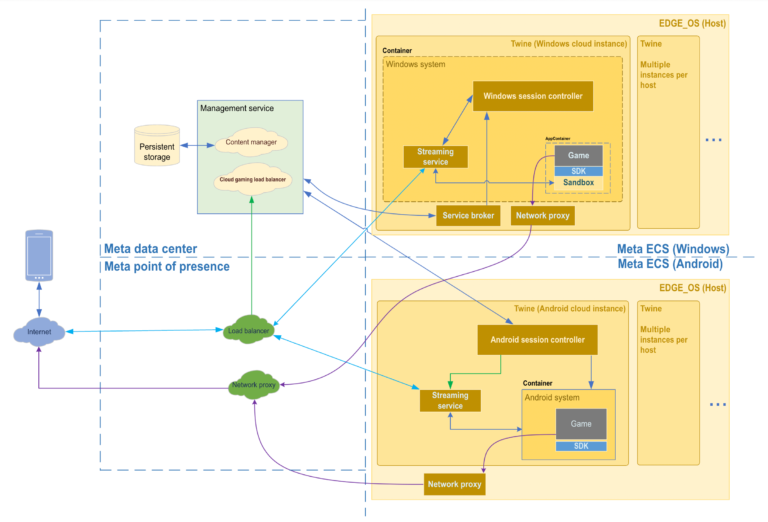

Traditional traffic flows were “North-South” (client to server). Agentic workflows generate massive “East-West” traffic (server to server, or agent to agent). Agent A talks to Agent B, which queries Database C, which returns results that flow back to Agent A. This internal chatter happens entirely within the data center.

Your network fabric must scale to handle gigabytes of context data moving between nodes with minimal delay. High-bandwidth hosting with low-latency interconnects (such as InfiniBand or other RDMA-capable fabrics) is becoming a standard requirement for AI workloads. Without this, the internal coordination overhead consumes more time than the actual AI processing.

Orchestration and Scaling Challenges

How do you manage software that decides when to run itself? Agentic workloads are testing the limits of Kubernetes. Autoscalers are commonly configured to react to CPU load, but an agent waiting on an API call shows low CPU usage while still holding large amounts of memory and task state.

Agent lifecycle management requires a new breed of orchestration. We need platforms that understand the “intent” of the agent. If an agent is midway through a complex task, you cannot simply kill the pod to scale down. Autoscaling policies must be state-aware.
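One way to picture a state-aware scale-down policy is shown below. This is a sketch under stated assumptions, not any orchestrator's actual API: the `AgentPod` record, its `busy` flag, and the 60-second idle threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AgentPod:
    name: str
    busy: bool          # True while the agent is mid-task
    idle_seconds: int   # time since the last completed task


def scale_down_candidates(pods: list[AgentPod], surplus: int, min_idle: int = 60) -> list[str]:
    """Pick up to `surplus` pods to stop, never touching one that is mid-task."""
    idle = [p for p in pods if not p.busy and p.idle_seconds >= min_idle]
    idle.sort(key=lambda p: p.idle_seconds, reverse=True)  # longest-idle first
    return [p.name for p in idle[:surplus]]


pods = [
    AgentPod("agent-a", busy=True, idle_seconds=0),
    AgentPod("agent-b", busy=False, idle_seconds=300),
    AgentPod("agent-c", busy=False, idle_seconds=30),
    AgentPod("agent-d", busy=False, idle_seconds=120),
]
print(scale_down_candidates(pods, surplus=3))
```

Note that the policy returns fewer pods than requested when stopping more would interrupt work in progress. The scaler asked for three; only two are safe to remove.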

To scale autonomous agents, engineers are turning to specialized AI orchestration platforms that sit on top of Kubernetes. These platforms manage the intricate dance of spinning up ephemeral environments for agents to work in, ensuring they have the right tools and permissions, and shutting them down gracefully when the task is complete.

Observability and Reliability Requirements

Debugging a standard application is hard. Debugging a non-deterministic agent that made a decision five steps ago is a nightmare without proper tools.

Telemetry must go beyond CPU and RAM. We need to track “tokens per second,” “tool usage success rates,” and “reasoning trace logs.” Failure recovery is also complex. If an agent crashes, does it restart from scratch, or can it resume from its last “thought”?
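Resuming from the last “thought” usually comes down to checkpointing. The following is a minimal sketch that persists completed steps to a JSON file after each one; the step names, the `fail_at` parameter used to simulate a crash, and the file layout are assumptions made for the example.

```python
import json
import tempfile
from pathlib import Path


def run_agent(steps, checkpoint: Path, fail_at=None):
    """Run steps in order, persisting progress after each completed step."""
    state = json.loads(checkpoint.read_text()) if checkpoint.exists() else {"done": []}
    for step in steps:
        if step in state["done"]:
            continue  # already completed before the crash
        if step == fail_at:
            raise RuntimeError(f"crashed during {step}")
        state["done"].append(step)
        checkpoint.write_text(json.dumps(state))
    return state["done"]


steps = ["plan", "search", "draft", "review"]
with tempfile.TemporaryDirectory() as tmp:
    ckpt = Path(tmp) / "agent.json"
    try:
        run_agent(steps, ckpt, fail_at="draft")   # simulated crash
    except RuntimeError:
        pass
    saved = json.loads(ckpt.read_text())["done"]  # progress that survived
    resumed = run_agent(steps, ckpt)              # picks up at "draft"

print(saved, resumed)
```

A production version would store richer state (tool outputs, the reasoning trace) and would need steps to be safe to retry, since a crash can happen after a side effect but before the checkpoint is written.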

Cost monitoring is the final piece of the puzzle. An autonomous agent left unchecked can burn through an API budget in minutes. AI observability tools must detect cost anomalies in real time and kill runaway processes before they bankrupt the department.
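The simplest form of runaway protection is a hard spending cap checked before every call. The sketch below assumes a hypothetical `CostGuard` class and an invented price of 1 cent per 1,000 tokens; amounts are tracked in integer cents to avoid floating-point drift.

```python
class BudgetExceeded(Exception):
    """Raised when a call would push an agent run past its spending cap."""


class CostGuard:
    """Tracks spend for one agent run and refuses calls past a hard cap."""

    def __init__(self, limit_cents: int):
        self.limit_cents = limit_cents
        self.spent_cents = 0

    def charge(self, tokens: int, cents_per_1k_tokens: int) -> None:
        cost = tokens * cents_per_1k_tokens // 1000
        if self.spent_cents + cost > self.limit_cents:
            raise BudgetExceeded(f"call would exceed {self.limit_cents} cents")
        self.spent_cents += cost


guard = CostGuard(limit_cents=100)
calls_made = 0
try:
    while True:  # a runaway agent loop
        guard.charge(tokens=20_000, cents_per_1k_tokens=1)  # hypothetical price
        calls_made += 1
except BudgetExceeded:
    pass

print(calls_made, guard.spent_cents)
```

A cap like this is a backstop rather than anomaly detection: it stops the bleeding at a known limit, while real-time monitoring aims to flag unusual spend well before the cap is reached.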

Security and Governance for Agentic Systems

Giving software autonomy introduces significant risk. Identity management for agents is a new frontier. Each agent should have its own identity, with least-privilege access. An agent designed to summarize emails should not have permission to delete database records.
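Least privilege for agents can be expressed as a deny-by-default allowlist. The sketch below is illustrative: the agent identities, tool names, and the `POLICY` table are hypothetical, and a real system would enforce this in the platform's identity layer rather than in application code.

```python
# Hypothetical policy: agent identity -> tools it may call
POLICY = {
    "email-summarizer": frozenset({"read_email"}),
    "db-maintainer": frozenset({"read_records", "delete_records"}),
}


def authorize(agent_id: str, tool: str) -> bool:
    """Deny by default: unknown agents and unlisted tools are refused."""
    return tool in POLICY.get(agent_id, frozenset())


print(authorize("email-summarizer", "read_email"))
print(authorize("email-summarizer", "delete_records"))
print(authorize("unknown-agent", "read_email"))
```

The important property is the default: an agent that is missing from the table gets nothing, rather than inheriting broad access.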

Data isolation is critical, especially in multi-tenant environments. You cannot risk Agent A leaking context to Agent B. Audit trails must be immutable and comprehensive, recording not just what the agent did, but why it decided to do it (by capturing the reasoning chain).

A robust AI security architecture includes “human-in-the-loop” checkpoints for high-stakes actions. Autonomous AI governance isn’t just a policy document; it’s a set of hard constraints baked into the infrastructure layer.
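A human-in-the-loop checkpoint can be as small as a gate in front of the action executor. In this sketch, the set of high-stakes action names and the `approve` callback (standing in for a real approval workflow such as a ticket or chat prompt) are assumptions.

```python
from typing import Callable

HIGH_STAKES = {"delete_records", "send_payment"}  # hypothetical action names


def execute(action: str, run: Callable[[], str], approve: Callable[[str], bool]) -> str:
    """Run low-risk actions directly; ask a human before high-stakes ones."""
    if action in HIGH_STAKES and not approve(action):
        return f"{action}: blocked, approval denied"
    return run()


def deny_all(action: str) -> bool:
    """Stand-in for a real approval workflow; rejects every request."""
    return False


print(execute("summarize_inbox", lambda: "summary ready", deny_all))
print(execute("send_payment", lambda: "payment sent", deny_all))
```

Because the gate wraps execution itself, the agent cannot skip it by reasoning its way around a policy document, which is the sense in which the constraint is baked into the infrastructure.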

Reference Architecture for Agentic AI Hosting (2026)

So, what does the solution look like? The ideal agentic AI architecture for 2026 is a hybrid beast.

It likely involves hybrid bare metal + GPU clusters for the heavy lifting (training and heavy inference), combined with serverless logic for the orchestration layers. Edge deployment plays a role for latency-sensitive agents that interact with users in real-time.

Data pipelines are tightly integrated, feeding real-time context into vector stores that sit directly next to the inference compute. This AI infrastructure blueprint moves away from general-purpose clouds toward specialized, AI-centric clouds designed for high throughput and low latency.

Migration Path from 2025 Infrastructure to 2026-Ready Architecture

You cannot rebuild everything overnight. The path to modernization starts with a gap analysis. Identify where your current latency lies and which components are blocking agent performance.

Adopt a strategy of phased upgrades. Start by moving your vector databases to high-performance storage. Then, upgrade your orchestration layer to support stateful, long-running processes.

Risk mitigation involves running 2026-ready pilots alongside your legacy stack. Don’t migrate your critical customer-facing chatbot first. Start with internal data analysis agents to test the limits of your upgraded infrastructure. The goal is to modernize server architecture iteratively, not destructively.

Business Risks of Delaying Infrastructure Modernization

The cost of inaction is high. Downtime in an agentic world means your digital workforce stops working. Cost overruns from inefficient, unoptimized infrastructure can destroy the ROI of AI initiatives.

Most importantly, there is the risk of competitive lag. Competitors who build on a 2026-native stack will run faster, smarter, and cheaper agents. They will automate complex workflows that you cannot touch. Delaying infrastructure modernization is a strategic error that may be impossible to recover from.

FAQ – Agentic AI Hosting

Q1: What is agentic AI hosting?

Agentic AI hosting refers to specialized infrastructure designed to support autonomous AI agents. Unlike standard web hosting, it prioritizes low-latency networking, high-performance GPUs, vector database integration, and stateful orchestration to handle the complex, multi-step reasoning loops of autonomous software.

Q2: Why can’t traditional cloud servers support autonomous agents?

Traditional servers are optimized for stateless, short-lived requests. Autonomous agents require long-running sessions, massive parallel processing, and high-speed communication between multiple agents (East-West traffic). Legacy architectures suffer from latency issues and cold-start delays that make agentic workflows slow and unreliable.

Q3: What hardware is required for agentic AI workloads?

Key requirements include high-density GPUs (like NVIDIA H100s or B200s) for inference, high-IOPS NVMe storage for vector database lookups, and high-bandwidth networking (InfiniBand or similar) to reduce latency between compute nodes.

Q4: How do you scale autonomous agents safely?

Scaling requires an AI-aware orchestration platform that goes beyond standard Kubernetes metrics. It involves state-aware autoscaling, robust identity management to prevent unauthorized actions, and real-time cost monitoring to prevent runaway agent spending.

Q5: Is GPU hosting required for agentic AI?

Yes, for most enterprise use cases. While some simple logic can run on CPUs, inference for the Large Language Models (LLMs) that power agents needs the parallel processing capabilities of GPUs to run at acceptable speeds.

Q6: How much does agentic AI infrastructure cost?

Costs vary wildly based on usage. However, agentic AI is generally more expensive than standard generative AI due to the “agent loop”—a single user request might trigger 50 internal inference calls. Cost optimization requires specialized infrastructure that can scale to zero and use spot instances effectively.
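A back-of-the-envelope comparison makes the “agent loop” multiplier concrete. The 50-call figure comes from the answer above; the 2,000 tokens per call and the price of $5 per million tokens are placeholder assumptions, not real pricing.

```python
def cost_per_request(inference_calls: int, tokens_per_call: int, usd_per_million_tokens: float) -> float:
    """Estimated model cost of serving one user request."""
    return inference_calls * tokens_per_call * usd_per_million_tokens / 1_000_000


# Assumed: 2,000 tokens per call at a placeholder $5 per million tokens.
single_shot = cost_per_request(1, 2_000, 5.0)
agentic = cost_per_request(50, 2_000, 5.0)   # 50 internal calls, per the text

print(f"single-shot: ${single_shot:.3f}")
print(f"agentic:     ${agentic:.3f}")
print(f"multiplier:  {agentic / single_shot:.0f}x")
```

In practice the multiplier can be higher still, because later calls in a loop tend to carry more accumulated context than the first.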

Conclusion

The era of static software is ending. As we move toward 2026, the organizations that succeed will be those that view infrastructure not as a commodity, but as a strategic enabler of autonomy.

Your 2025 architecture served you well for the era of the chatbot, but it will buckle under the weight of the digital workforce. The demands for latency, memory, and parallel processing require a fundamental rethink of how we build and host systems.

Don’t let your infrastructure be the bottleneck that holds back your AI innovation. Audit your current stack, identify the gaps, and begin the migration to a high-performance, agent-ready environment today.